DeepSpeed: Accelerating large-scale model inference and training via system optimizations and compression - Microsoft Research

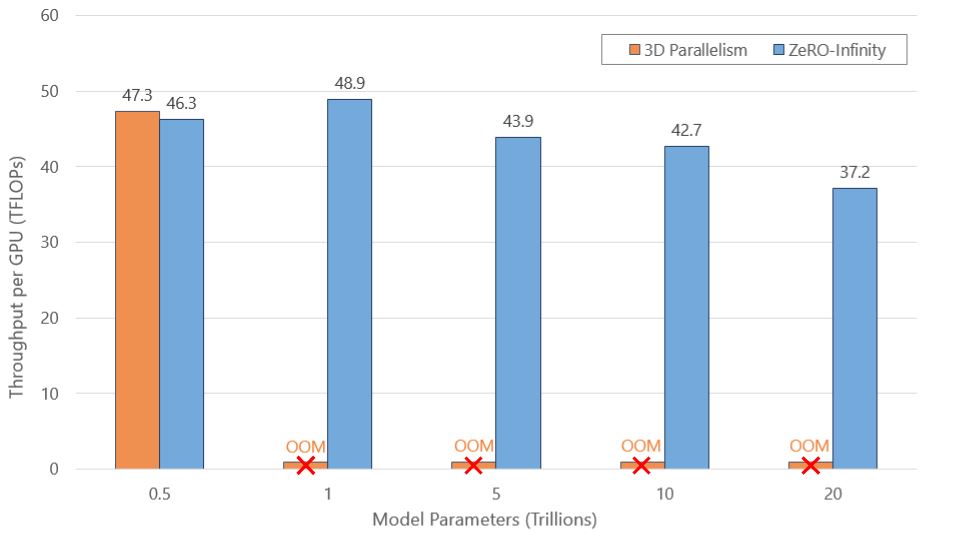

Last month, the DeepSpeed Team announced ZeRO-Infinity, a step forward in training models with tens of trillions of parameters. In addition to creating optimizations for scale, our team strives to introduce features that also improve speed, cost, and usability. As the DeepSpeed optimization library evolves, we are listening to the growing DeepSpeed community to learn […]

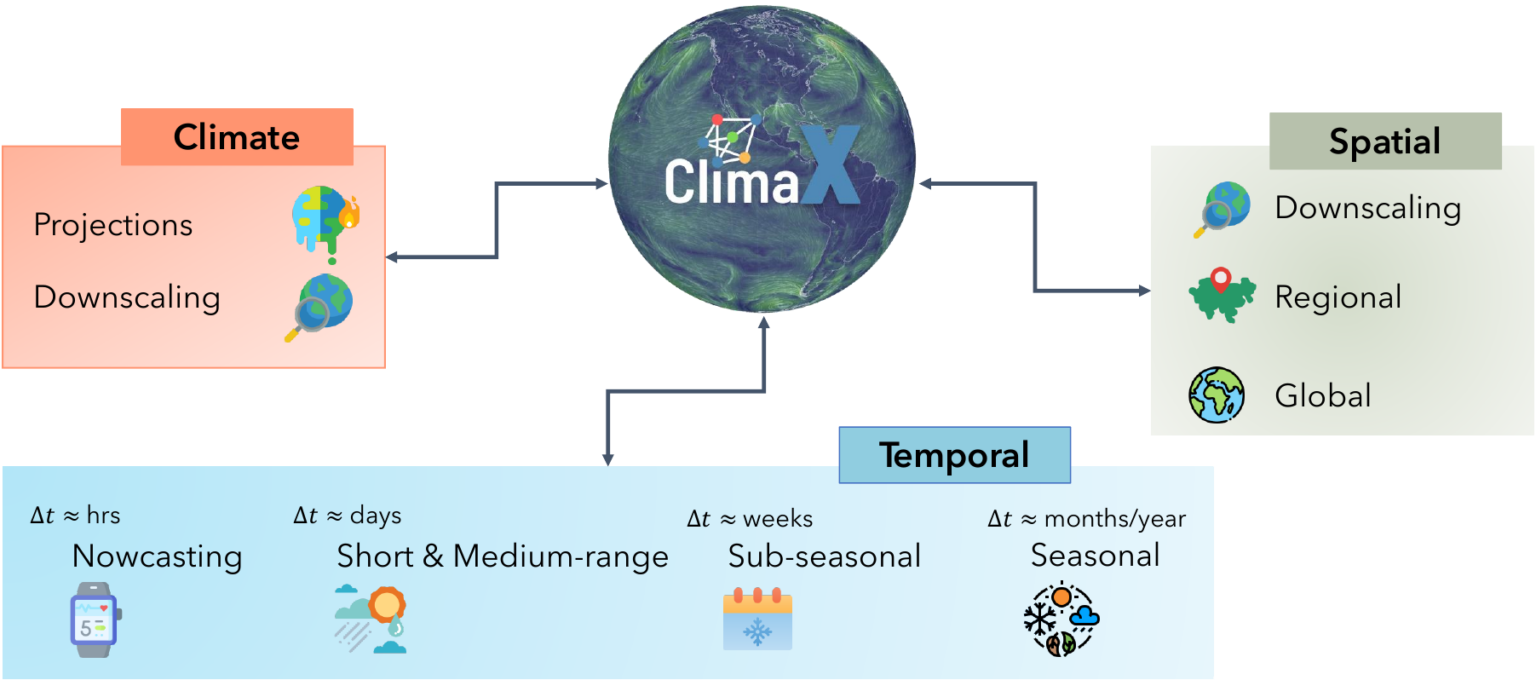

Announcing the DeepSpeed4Science Initiative: Enabling large-scale scientific discovery through sophisticated AI system technologies - Microsoft Research

TensorFlow to PyTorch for SLEAP: Is it Worth it?

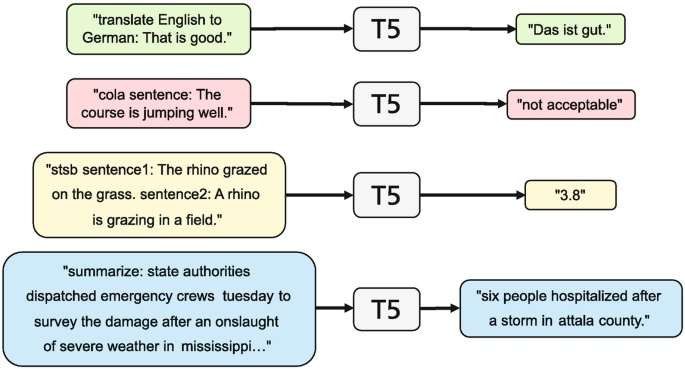

Improving Pre-trained Language Models

DeepSpeed Compression: A composable library for extreme compression and zero-cost quantization - Microsoft Research

Optimization Strategies for Large-Scale DL Training Workloads: Case Study with RN50 on DGX Clusters

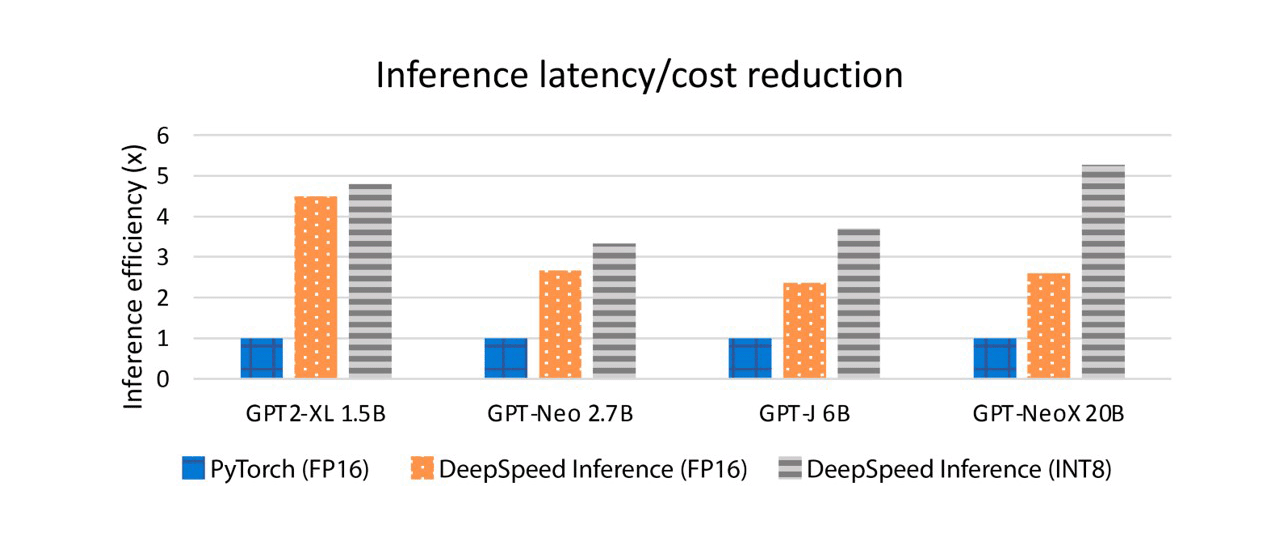

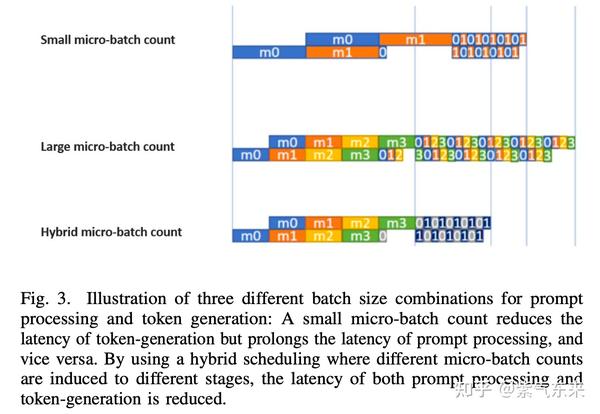

LLM (12): Exploring DeepSpeed Inference Optimizations for LLM Inference - Zhihu

DeepSpeed/README.md at master · microsoft/DeepSpeed · GitHub

Comparison of test and training time of the benchmark network

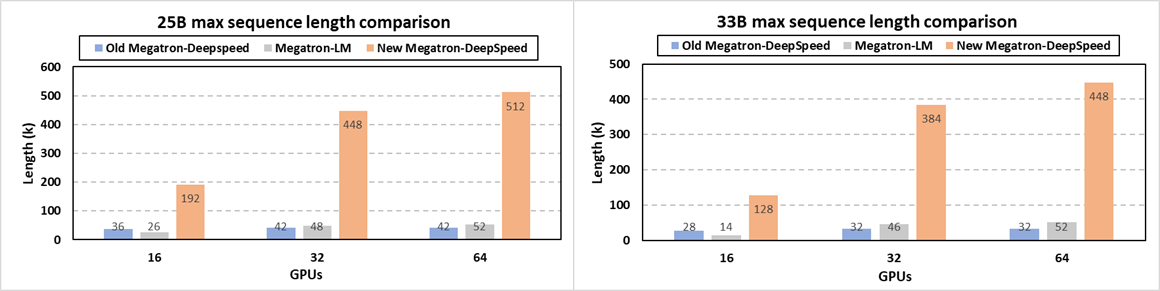

ZeRO-Infinity and DeepSpeed: Unlocking unprecedented model scale for deep learning training - Microsoft Research